Complete Guide to Generative Engine Optimization (GEO)

By Luca Pizzola, Founder of Oltre.ai | Published December 2025

Last updated: March 2026

Generative Engine Optimization (GEO) is the practice of structuring content and brand signals so AI systems like ChatGPT, Perplexity, Claude, Gemini, and Google’s AI products select, cite, and recommend you in generated answers. Unlike SEO, GEO optimizes for extractable answers, entity clarity, and third‑party validation—because many AI journeys end with a citation, not a click.

What Is Generative Engine Optimization (GEO) and Why Does It Matter in 2026?

Generative Engine Optimization (GEO) (optimization for AI-generated answers) is the discipline of making your content and brand citable when a generative engine uses Retrieval-Augmented Generation (RAG) (LLMs retrieving web sources before answering) to synthesize a response. The term was introduced by Princeton University researchers, who framed GEO as improving visibility inside answers produced by generative engines.

In 2026, GEO matters because AI systems cite only a small subset of sources per response, and that selection drives “default recommendations.” Google AI Overviews (Google’s generated summaries in Search) now appear in more than 50% of searches as of March 2026, according to ecosystem tracking summarized in Search Engine Land’s “Mastering generative engine optimization in 2026.” In SearchGPT, 87% of citations match Bing’s top organic results (Seer Interactive, cited in Search Engine Land, 2026: source).

AI systems are looking for direct, extractable answers. Lead with answers. Put the key information at the beginning of each section.

Five evidence-backed takeaways (2026):

- GEO complements SEO: SearchGPT citations align with Bing top results 87% of the time (Seer Interactive via Search Engine Land, 2026: source).

- Fan-out wins citations: AI engines break queries into sub-queries (“query fan-out”), and pages covering multiple sub-intents earn more selection opportunities (LLMrefs, 2026: source).

- Freshness is a ranking factor in AI retrieval: content older than 3 months sees fewer citations due to recency bias (LLMrefs, 2026: source).

- Structured evidence helps: pages with structured lists, quotes, and statistics saw 30–40% higher visibility in AI responses across 10,000 queries (LLMrefs, 2026: source).

- Platform behavior differs: Google AI products heavily cite YouTube and other multimodal sources; Perplexity heavily cites community sources like Reddit (ecosystem summaries referenced by Search Engine Land, 2026: source).

The rest of this guide shows how to win citations across platforms, build off-site authority, and measure GEO outcomes for ecommerce teams.

GEO vs. SEO: What Changes When Search Engines Generate the Answer?

GEO does not replace SEO. GEO extends SEO into AI-generated answer environments by optimizing for citation surfaces (answer capsules, tables, entity definitions) and for how engines retrieve sources during RAG. Traditional ranking still matters, but selection and attribution matter more when the “answer” is generated directly inside ChatGPT or Google AI Overviews.

| Dimension | Traditional SEO | GEO | Notes / sources |
|---|---|---|---|
| Primary goal | Rank links | Get cited in answers | Search Engine Land (2026) source |
| Result format | 10 blue links | Single synthesized response | Google AI Overviews behavior (2026) source |
| Success metric | Clicks / traffic | Citations / mentions / SOV | eMarketer framing (2026) source |
| Content structure | Keyword-targeted pages | Extractable sections + tables | LLMrefs (2026) source |
| Source preferences | Your domain + backlinks | Earned media + UGC + docs | Search Engine Land (2026) source |
| Freshness needs | Periodic updates | Frequent refresh cycles | “Older than 3 months” penalty (LLMrefs, 2026) source |
| Optimization tactics | On-page + links + tech SEO | Answer capsules + entity clarity | LLMrefs (2026) source |

Brands need both when discovery starts in AI but validation still happens on Google Search. For ecommerce, “best [product] for [use case]” queries often produce shortlist-style answers; GEO helps your SKU, category page, and brand get selected as a cited option. For deeper overlap, see our comprehensive comparison of GEO and SEO strategies.

How to Optimize for ChatGPT, Claude, Perplexity, Gemini, Google AI Overviews, and Google AI Mode

Each engine retrieves and cites differently, so a single one-size-fits-all optimization pass is not enough. ChatGPT often reflects Bing’s top results, Claude draws from Brave Search, Perplexity rewards current-year freshness and community validation, and Google AI products reward semantic completeness plus multimodal signals like YouTube.

| Platform | Retrieval / index behavior | What it tends to cite | Most important on-page tactic |
|---|---|---|---|
| ChatGPT | Bing-influenced retrieval | Wikipedia + high-authority pages | Front-load answer capsules + clean headings |
| Claude | Brave Search (backend) | Diverse, well-sourced pages | Semantic HTML + balanced citations |
| Perplexity | Freshness-heavy retrieval | Reddit + recent publishers | Date every stat + 40–60 word chunks |
| Gemini | Google Search + Knowledge Graph | YouTube + trusted brands | Entity definitions + multimodal context |
| Google AI Overviews | Fan-out across sub-queries | Mixed sources + YouTube | Semantic completeness + comparisons |
| Google AI Mode | Encyclopedic, multi-link answers | Wikipedia + UGC + YouTube | Define entities inline + structured tables |

What to act on first: (1) add answer capsules and section-level direct answers for ChatGPT (see effective tactics to earn citations from ChatGPT); (2) implement a freshness cadence for Perplexity (see latest Perplexity SEO optimization techniques); (3) build Google-specific completeness for AI Overviews and AI Mode (see strategies to rank in Google AI Overviews and guidance on appearing in Google AI Mode results).

For the Google ecosystem, referencing an official product demo video (YouTube) and ensuring the page supports extractable definitions often improves selection likelihood because YouTube is disproportionately cited in Google’s AI surfaces (industry tracking summarized in Search Engine Land, 2026: source).

6 Proven Strategies to Increase AI Citations

Based on the original Princeton research and industry best practices, these strategies are proven to increase AI citation rates. The percentages represent visibility improvements observed in controlled studies.

| Tactic | Why it works for AI citation | Evidence / date |
|---|---|---|
| Statistics + data | Improves confidence and quotability | LLMrefs: +30–40% (2026) source |
| Expert quotations | Adds authority signals and attribution | LLMrefs: +30–40% (2026) source |
| Source citations | Signals verification and trust | Search Engine Land (2026) source |
| Answer capsules | Creates extractable “ready-to-cite” text | LLMrefs guidance (2026) source |
| AI-parsable structure | Improves chunking and retrieval | PRIME guide (2026) source |
| Freshness cadence | Recency bias in AI retrieval | LLMrefs “3 months” (2026) source |

1. Add Statistics and Data (+30-40% visibility)

Quantified information is easier for AI systems to cite. Include specific numbers, percentages, and data points throughout your content, and attach a date and source. LLMrefs reports pages with structured lists, quotes, and statistics show 30–40% higher visibility across 10,000 queries (LLMrefs, 2026: source).

Pro tip: Create original research when possible. Original data is highly citable and differentiates you from competitors summarizing the same third-party sources.

2. Include Expert Quotations (+30-40% visibility)

Named, attributable quotes increase perceived trust. Quotes from recognized experts boost credibility and citation rates because they provide an explicit authority anchor an LLM can repeat.

Treating GEO as a one-time content tweak is the biggest mistake you can make. In reality, GEO demands the same ongoing discipline as SEO.

Quote industry experts by name and title. Use quotes to support key claims, not replace your analysis. Proper attribution matters, so always include clear source information.

3. Cite Your Sources (+30-40% visibility)

Citing authoritative sources makes your content more “verifiable” to retrieval systems. Paradoxically, citing other sources makes your content more likely to be cited because it signals diligence and reduces hallucination risk.

Link to authoritative sources for factual claims. Reference academic papers, industry reports, and government data. Use proper citation formatting consistently (see practical examples in Search Engine Land, 2026: source).

4. Write Answer Capsules (Highest correlation with ChatGPT citations)

An answer capsule is the fastest way to create a quotable snippet: a 40–75 word summary that directly answers a question, placed near the beginning of the content. This is the format most likely to be extracted and cited by AI.

Start articles with a clear, concise answer to the main question. Keep it to 40–75 words. Use clear, declarative statements without hedging. Write it as if it’s the exact text you want AI to quote.
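The 40–75 word target is easy to check automatically before publishing. The snippet below is a minimal sketch, assuming a capsule is plain text and that "words" means whitespace-separated tokens; the function names `count_words` and `check_capsule` are illustrative, not part of any tool mentioned in this guide.

```python
import re

# Word-count bounds for an answer capsule, taken from the guidance above.
MIN_WORDS = 40
MAX_WORDS = 75


def count_words(text: str) -> int:
    """Count whitespace-separated tokens that contain a letter or digit."""
    return sum(1 for token in text.split() if re.search(r"\w", token))


def check_capsule(text: str) -> tuple[int, bool]:
    """Return (word count, whether the count falls inside the target range)."""
    n = count_words(text)
    return n, MIN_WORDS <= n <= MAX_WORDS


if __name__ == "__main__":
    draft = "Generative Engine Optimization is the practice of structuring content."
    words, ok = check_capsule(draft)
    print(f"{words} words, within range: {ok}")
```

A check like this only measures length; whether the capsule actually answers the question still needs a human read.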

5. Structure Content for AI Parsing

Structure increases extractability. AI systems parse structured content more easily than walls of text, especially when headings map to natural questions and sections stand alone.

Use clear H2/H3 heading hierarchy that describes content accurately. Format content in question-answer patterns where appropriate. Add tables for comparative information, since AI systems extract tables exceptionally well. Implement FAQPage schema (structured Q&A markup), Article schema (publisher + author metadata), and Organization schema (brand entity metadata). PRIME’s 2026 GEO guide highlights schema markup and machine-readable formatting as core enablers (source).
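As an illustration of the FAQPage markup mentioned above, the sketch below builds a schema.org FAQPage object as JSON-LD from question-answer pairs. The helper names `faq_jsonld` and `script_tag` are hypothetical, and the sample question is taken from this guide's own FAQ; validate real output with a schema validator before shipping.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as a schema.org FAQPage JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


def script_tag(jsonld: str) -> str:
    """Wrap JSON-LD in a script tag, escaping '</' so answer text cannot close the tag."""
    safe = jsonld.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{safe}\n</script>'


if __name__ == "__main__":
    pairs = [
        (
            "How long does GEO take to show results?",
            "Early movement usually appears in 2-6 weeks for a focused set of queries.",
        )
    ]
    print(script_tag(faq_jsonld(pairs)))
```

Article and Organization markup follow the same pattern with different `@type` values and properties.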

6. Prioritize Content Freshness

Recency is a selection signal in AI retrieval. Perplexity and similar engines heavily weight new or recently updated content. LLMrefs notes content older than 3 months tends to receive significantly fewer citations due to recency bias (LLMrefs, 2026: source).

Update existing high-performing content regularly. Include visible publication and update dates. Refresh statistics and references at least quarterly, and more often for competitive ecommerce categories.
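A refresh cadence is easier to keep when stale pages are flagged automatically. The following is a rough sketch, assuming you can export each page's last-updated date (for example from a CMS) and approximating "about 3 months" as 90 days; the URLs and the `stale_pages` helper are made up for illustration.

```python
from datetime import date, timedelta

# Assumption: "about 3 months" is approximated as 90 days.
STALE_AFTER = timedelta(days=90)


def stale_pages(last_updated: dict[str, date], today: date) -> list[str]:
    """Return the URLs whose last update is more than STALE_AFTER before `today`."""
    return sorted(
        url for url, updated in last_updated.items() if today - updated > STALE_AFTER
    )


if __name__ == "__main__":
    pages = {
        "/guides/geo": date(2026, 1, 15),
        "/guides/old-roundup": date(2025, 11, 1),
    }
    for url in stale_pages(pages, today=date(2026, 3, 1)):
        print(f"Refresh needed: {url}")
```

Competitive ecommerce categories may warrant a shorter threshold than the 90 days assumed here.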

Building Authority: Why Your Website Isn't Enough

Here's a critical insight most brands miss: your own website is not your best GEO asset. AI systems evaluate a brand entity across the web—reviews, community discussions, and earned media—not just a single URL on your domain.

In practice, generative engines trust third-party sources more than brand-owned content. Search Engine Land’s 2026 GEO coverage highlights that sites with profiles on review platforms like G2, Capterra, and Trustpilot are 3x more likely to be cited (SE Ranking, Nov 2025, cited in Search Engine Land, 2026: source).

Where to build your presence: Reddit (community validation), Wikipedia (encyclopedic entity context), YouTube (video + transcripts), and industry publications (earned media). For ecommerce, review ecosystems and “best [product]” roundups often become the sources AI assistants reuse—so authority building directly affects product discovery. For category-specific playbooks, see applying GEO strategies in ecommerce contexts.

How to Measure GEO Success: Citations, Mentions, Share of Voice, and Revenue Signals

GEO measurement starts with citations, but it should end with business outcomes. A citation (a linked source in an AI answer) and a brand mention (unlinked inclusion in an answer) can influence buyers even when the session is zero-click. eMarketer’s 2026 guidance frames GEO as a branding channel where visibility and recall matter alongside traffic (source).

For ecommerce teams, the practical ROI lens is: “Are AI assistants recommending the brand, the category page, and the hero products—and does that correlate with assisted conversions, branded search lift, or higher conversion rate from AI referrals?” For workflows and dashboards, see tools and methods for AI citation tracking.

| Metric | What to Track | How to Track |
|---|---|---|
| Brand mention frequency | How often your brand appears in AI responses | Manual testing of 10-15 target queries monthly |
| Citation rate | Percentage of relevant queries where you're cited | Document results across ChatGPT, Perplexity, Claude, Gemini |
| Share of voice | Your mentions vs. competitors for target queries | Compare competitor citation rates for same queries |
| Citation quality | Whether you're cited positively and as a primary source | Analyze context and sentiment of mentions |
| AI referral traffic | Visits from chatgpt.com (formerly chat.openai.com), perplexity.ai, claude.ai | Google Analytics 4 referral reports |
| Conversion rate from AI | Leads/demos from AI-sourced visits | Compare to traditional search (expect 2-4x higher) |
| Assisted conversions | Sales where an AI visit preceded a later direct or paid purchase | GA4 attribution + cohort analysis |
| Branded search lift | Increase in brand queries after AI visibility improves | Google Search Console trends + query groups |
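Citation rate and share of voice from the table above reduce to simple arithmetic over a citation log. The sketch below assumes one log row per (query, engine) test with the set of brands that were cited; the `Observation` structure and the brand names are hypothetical, and share of voice is computed here as a brand's share of all tracked-brand citations, which is one reasonable definition among several.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    """One manual test: which brands an engine cited for a query."""
    query: str
    engine: str
    cited_brands: frozenset[str]


def citation_rate(log: list[Observation], brand: str) -> float:
    """Fraction of logged tests in which `brand` was cited."""
    if not log:
        return 0.0
    return sum(brand in obs.cited_brands for obs in log) / len(log)


def share_of_voice(log: list[Observation], brands: list[str]) -> dict[str, float]:
    """Each tracked brand's share of all citations earned by tracked brands."""
    counts = Counter(b for obs in log for b in obs.cited_brands if b in brands)
    total = sum(counts.values())
    return {b: (counts[b] / total if total else 0.0) for b in brands}


if __name__ == "__main__":
    log = [
        Observation("best trail shoes", "ChatGPT", frozenset({"Acme", "Rival"})),
        Observation("best trail shoes", "Perplexity", frozenset({"Rival"})),
    ]
    print(citation_rate(log, "Acme"))
    print(share_of_voice(log, ["Acme", "Rival"]))
```

Re-running the same query set each month keeps the numbers comparable over time.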

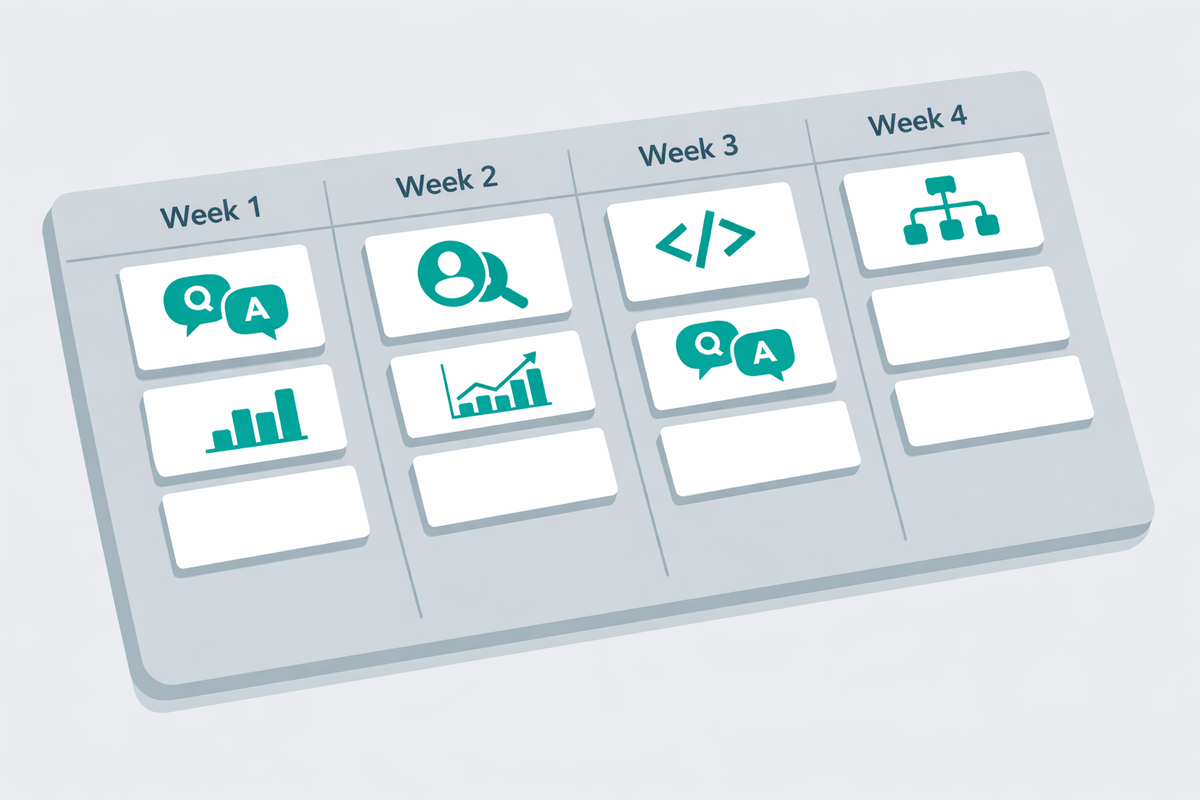

Your First 30 Days of GEO: A Practical Implementation Roadmap

A realistic first 30 days of GEO looks like: measure baseline visibility, fix technical extractability, optimize your best pages, then expand off-site authority. GEO compounds like SEO, but it only compounds if the basics (structure, freshness, source diversity) are in place.

| Week | Goal | Deliverable | Success checkpoint |
|---|---|---|---|
| Week 1 | Baseline visibility | Query set + citation log | 10–15 queries tested across engines |
| Week 2 | Technical foundation | Schema + crawlability fixes | FAQPage/Article/Organization validated |
| Week 3 | On-page upgrades | Answer capsules + refreshed stats | Top 5 pages rewritten for extraction |
| Week 4 | Off-site authority | Review + community + PR plan | 3–5 targets queued (Reddit, G2, press) |

Week 1

Audit Your Current Visibility

- ✓ Test your brand name across ChatGPT, Perplexity, Claude, and Gemini

- ✓ Document current citation rate for 10-15 key queries

- ✓ Identify which competitors are being cited and analyze why

- ✓ Assess your top content for GEO optimization gaps

Avoid this: testing only your brand name. Include “discovery” queries like “best [category] for [use case]” and “validation” queries like “is [brand] legit?” (Prefixbox, 2026: source).
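One way to avoid a brand-only audit is to generate the query set from templates. This is a small sketch using the "discovery" and "validation" patterns quoted above; the brand, categories, and use cases are placeholders, and `build_query_set` is an illustrative helper rather than part of any tool.

```python
from itertools import product


def build_query_set(brand: str, categories: list[str], use_cases: list[str]) -> list[str]:
    """Combine brand, discovery, and validation queries into one baseline list."""
    discovery = [
        f"best {category} for {use_case}"
        for category, use_case in product(categories, use_cases)
    ]
    validation = [f"is {brand} legit?"]
    return [brand] + discovery + validation


if __name__ == "__main__":
    queries = build_query_set(
        "Acme",  # placeholder brand
        categories=["running shoes"],
        use_cases=["flat feet", "trail running"],
    )
    for query in queries:
        print(query)
```

Trim the generated list to the 10-15 queries that matter most so the monthly manual test stays manageable.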

Week 2

Fix Your Technical Foundation

- ✓ Add FAQ schema to your highest-traffic pages

- ✓ Implement Article and Organization schema

- ✓ Ensure AI crawlers aren't blocked in robots.txt

- ✓ Verify site speed and mobile responsiveness

Avoid this: vague headings like “Overview.” Use question-style H2s and keep sections self-contained so RAG chunking works reliably.
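The robots.txt item in the checklist above can be verified with Python's standard-library parser. This is a hedged sketch: the user-agent tokens listed are ones the respective vendors are understood to publish, but the list is an assumption and should be confirmed against each vendor's current crawler documentation before you rely on it.

```python
from urllib.robotparser import RobotFileParser

# Assumption: illustrative AI crawler tokens; verify against vendor documentation.
AI_CRAWLERS = ["GPTBot", "OAI-SearchBot", "ClaudeBot", "PerplexityBot"]


def blocked_crawlers(robots_txt: str, url: str = "https://example.com/") -> list[str]:
    """Return the AI crawler tokens that robots.txt forbids from fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_CRAWLERS if not parser.can_fetch(agent, url)]


if __name__ == "__main__":
    sample = "\n".join(
        [
            "User-agent: GPTBot",
            "Disallow: /",
            "",
            "User-agent: *",
            "Allow: /",
        ]
    )
    print(blocked_crawlers(sample))
```

Feed the function the live contents of your own robots.txt and test a few representative URLs, since rules are often path-specific.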

Week 3

Optimize Your Best Content

- ✓ Add answer capsules (40-75 words) to your top 5 pages

- ✓ Update all statistics and add source citations

- ✓ Restructure headings for clear hierarchy

- ✓ Add tables where you have comparative information

Avoid this: stale stats without dates. Perplexity-style engines reward current-year updates (LLMrefs, 2026: source).

Week 4

Expand Your Off-Site Presence

- ✓ Identify 3-5 subreddits where your audience asks questions

- ✓ Reach out for 2-3 guest posting opportunities

- ✓ Update your profiles on G2, Capterra, or relevant review sites

- ✓ Plan ongoing content calendar with GEO principles built in

Avoid this: relying only on brand-owned pages. Review profiles and community validation can materially increase citation odds (Search Engine Land, 2026: source).

Tooling note: Use Google Search Console (query coverage), a schema validator (markup QA), and a citation monitor like Oltre.ai (cross-engine tracking) to keep the loop tight.

FAQ: GEO Costs, Timelines, Tools, and Results

How long does GEO take to show results?

GEO usually shows early movement in 2–6 weeks for a focused set of queries, especially after updating top pages with answer capsules, dated statistics, and improved headings. Durable gains take 2–3 months because engines re-crawl, re-rank sources, and test different citations as freshness and authority signals change.

How much does GEO cost?

GEO costs range from near-zero (in-house updates to existing pages) to five figures per month for ongoing content, digital PR, and review/community programs. The biggest cost driver is cadence: because content older than about 3 months tends to earn fewer citations (LLMrefs, 2026), teams often budget for continuous refreshes.

What tools help with GEO?

GEO toolkits typically include a citation tracker (to monitor mentions across ChatGPT and Perplexity), Google Search Console (to map fan-out queries), a schema validator (FAQPage and Article markup), and a content QA workflow for dates, sources, and entity definitions. Ecommerce teams also add product feed and category-page auditing.

Can a small ecommerce team do GEO without a full content team?

Small teams can run GEO by prioritizing the top 10 revenue-driving categories and products, then adding answer capsules, comparison tables, and updated FAQs. Off-site authority can start with review profile hygiene (G2, Trustpilot) and a lightweight Reddit participation plan. Consistency matters more than volume.

Is GEO worth it if SEO is already working?

GEO is worth it when buyers increasingly start discovery in AI assistants, because strong SEO does not guarantee being cited in generated answers. GEO adds extractable structure and third-party validation so the brand appears in shortlists and “best for” recommendations. Many teams treat it as a branding and conversion-assist layer on top of SEO.

See Where You Stand in AI Search

Oltre.ai tracks your brand visibility across ChatGPT, Perplexity, Claude, and other AI platforms. See exactly where you're being cited, and where your competitors are winning.

Check your AI visibility for free